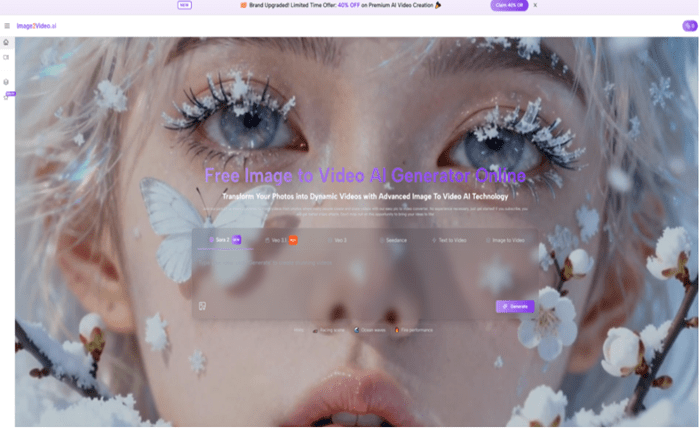

There is a subtle shift happening in how visual content is perceived. Images are no longer treated as complete units. They are increasingly seen as fragments, starting points for something that should evolve over time. For many creators, the problem is not generating ideas but translating those ideas into motion without stepping into complex editing environments. That gap is where tools like Image to Video AI begin to reshape expectations.

What becomes interesting is not just the ability to animate an image, but the change in how users think about images themselves. Instead of asking “is this image good enough,” the question becomes “what could this image become if it moved.”

Why The Role Of Images Is Changing In Creative Workflows

Images Are Becoming Inputs Instead Of Endpoints

In earlier workflows, an image was often the final output. Now, it functions more like raw material. The expectation is that it can be extended, transformed, or reinterpreted.

Motion Defines Perceived Value

A static visual might communicate information, but motion introduces narrative. Even minimal movement, such as a slow zoom or a subtle perspective shift, can change how content is perceived.

Speed Of Creation Has Become A Priority

In many contexts, especially social media and marketing, producing content quickly matters more than perfecting it. This changes the tools that feel practical.

How The System Treats Images Differently

Images As Structured Data

From what I’ve observed, the system doesn’t treat an image as a flat picture. It analyzes elements like foreground, background, and implied depth to determine how motion can be applied.
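To make the idea of depth-driven motion concrete, here is a minimal sketch of parallax, one common way that implied depth can be turned into movement. It is not a description of how this tool works internally. The `Layer` class, the layer names, and the depth values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    depth: float  # relative distance from the camera; larger means farther away


def parallax_offsets(layers, camera_shift_px):
    """Return a horizontal pixel offset per layer for a sideways camera move.

    Nearer layers move more than distant ones. That difference is the basic
    parallax cue that makes a flat image read as three-dimensional.
    """
    if any(layer.depth <= 0 for layer in layers):
        raise ValueError("depth must be positive")
    nearest = min(layer.depth for layer in layers)
    # The nearest layer moves by the full shift; others scale by inverse depth.
    return {
        layer.name: camera_shift_px * nearest / layer.depth
        for layer in layers
    }


# Hypothetical scene: a subject in front of trees and a distant sky.
layers = [Layer("subject", 1.0), Layer("trees", 4.0), Layer("sky", 20.0)]
offsets = parallax_offsets(layers, camera_shift_px=40)
print(offsets)
```

In this sketch the subject shifts the most and the sky barely moves, which is why images with a clear foreground and background tend to give motion something to work with.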

Language As Instruction

Instead of adjusting sliders or keyframes, users describe intent. This shifts control from technical precision to conceptual clarity.

Model Selection As Style Choice

Different models appear to interpret motion differently. Some outputs feel more cinematic, while others lean toward stylized animation.

The Practical Workflow In Context

Step 1 Upload A Base Image

Users provide a JPEG or PNG file. The clarity and composition of this image influence how stable the final output appears.

Step 2 Describe The Intended Motion

A text prompt defines how the image should evolve. This can include camera movement, emotional tone, or environmental changes.

Step 3 Generate The Video

After selecting a model, the system processes the request. In my testing, results typically appear within a few minutes.
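The three steps can be summarized as a small data structure. This is only an illustrative sketch: the tool is used through its interface, and no public API is described here, so `GenerationRequest`, `build_request`, and the `"cinematic"` model label are hypothetical names. The only detail taken from the steps above is that the input is a JPEG or PNG, a text prompt, and a model choice.

```python
from dataclasses import dataclass
from pathlib import Path

# Step 1 mentions JPEG and PNG as the accepted image types.
ALLOWED_SUFFIXES = {".jpg", ".jpeg", ".png"}


@dataclass(frozen=True)
class GenerationRequest:
    image_path: str
    prompt: str
    model: str  # hypothetical label; real model names are not listed here


def build_request(image_path, prompt, model):
    """Validate the three inputs and bundle them into one request object."""
    suffix = Path(image_path).suffix.lower()
    if suffix not in ALLOWED_SUFFIXES:
        raise ValueError(f"unsupported image type: {suffix or 'none'}")
    if not prompt.strip():
        raise ValueError("prompt must describe the intended motion")
    return GenerationRequest(image_path, prompt.strip(), model)


request = build_request(
    "portrait.png",
    "slow zoom toward the subject, soft evening light",
    model="cinematic",
)
print(request)
```

Keeping the prompt as plain text mirrors the point made earlier: the creative decision lives in the description, not in sliders or keyframes.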

Comparing Creative Entry Points

| Entry Point | Description | Creative Effort | Technical Skill |
| --- | --- | --- | --- |
| Static Image | Single frame output | Low | None |
| AI Motion Generation | Prompt-based animation | Moderate | Low |
| Traditional Editing | Manual video production | High | High |

This table suggests that the tool doesn’t replace existing methods. It introduces a middle ground between a static image and manual video editing.

Where This Approach Feels Most Natural

Concept Testing

Instead of building a full video, creators can quickly test whether an idea works visually.

Visual Story Prototyping

Short motion clips can act as drafts for larger projects.

Content Expansion

A single image can generate multiple variations, each with different motion styles.

Limitations That Shape Its Use

Ambiguity In Interpretation

Because prompts are descriptive, results may vary. The system interprets rather than executes exact instructions.

Dependence On Image Quality

Images with clear subjects and depth tend to produce more convincing motion.

Limited Scene Complexity

The system works best with single-scene transformations rather than complex narratives.

How This Changes Creative Thinking

One of the more interesting effects is how it shifts decision-making. Instead of planning every detail, users iterate quickly. They generate, observe, adjust, and repeat.

This creates a feedback loop that feels closer to experimentation than production.

From Single Image To Multi-Clip Narrative

Over time, users often begin to chain outputs together. This is where the idea of Photo to Video becomes more relevant, not as a single feature but as a workflow pattern.

By generating multiple clips from different images or prompts, a broader sequence can be assembled.
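One way to assemble downloaded clips into a sequence, outside the tool itself, is ffmpeg's concat demuxer, which reads a plain-text list of files. The sketch below only builds that list. The clip filenames are hypothetical, and the approach is an assumption about how a creator might stitch clips together, not a feature of the tool.

```python
def concat_list(clip_paths):
    """Build the text of an ffmpeg concat-demuxer list file, one clip per line."""
    if not clip_paths:
        raise ValueError("at least one clip is required")
    lines = []
    for path in clip_paths:
        # Keep the sketch simple: refuse paths that would need quote escaping.
        if "'" in path:
            raise ValueError(f"path contains a single quote: {path}")
        lines.append(f"file '{path}'")
    return "\n".join(lines) + "\n"


# Hypothetical filenames for three generated clips, in playback order.
clips = ["01_intro.mp4", "02_reveal.mp4", "03_close.mp4"]
listing = concat_list(clips)
print(listing, end="")
```

Saved as `clips.txt`, the list can be passed to `ffmpeg -f concat -safe 0 -i clips.txt -c copy sequence.mp4`. Stream copy generally works only when the clips share the same codec and resolution; otherwise the clips need to be re-encoded.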

Why This Matters Beyond Convenience

The significance is not just speed. It is accessibility. By lowering technical barriers, more people can explore motion-based storytelling.

From my perspective, the outputs are not always precise, but they often capture the essence of what the user intended.

What This Suggests About Future Creative Tools

If this direction continues, we may see:

- More predictable prompt interpretation

- Greater control through descriptive language

- Integration with audio and multi-scene structures

But even now, the shift is clear: images are no longer static endpoints. They are starting points for motion.

And that changes how creators think about what they produce.